Dark Factory

The proof surface behind Explore

Explore is the product. Dark Factory is the operating model and proof surface behind it: humans scope the job, agents carry more of the execution, and visible evidence decides whether the work should be trusted.

This page is for the delivery proof: scoped intent, validation, run logs, and live status surfaces that strengthen trust in the product without replacing the product story.

Want the product story first? Read /about. Ready to try Explore? Open /agent-setup.

Scoped by humans

The job, checks, and boundaries are defined up front.

Executed by agents

Agents carry the implementation work inside that scope.

Trusted through evidence

Run logs, verification, and status surfaces show what happened.

How to read this page

Start with Explore as the product, then use this page to inspect how it is shipped: a human scopes the change, agents execute it, and the repository keeps the proof attached.

The goal is faster trust in shipped work and a clearer product story, not theory about automation.

Visible proof, not just claims

A real delivery trail

The strongest claim on this page is simple: the repo exposes the delivery trail around the code, not just the code itself.

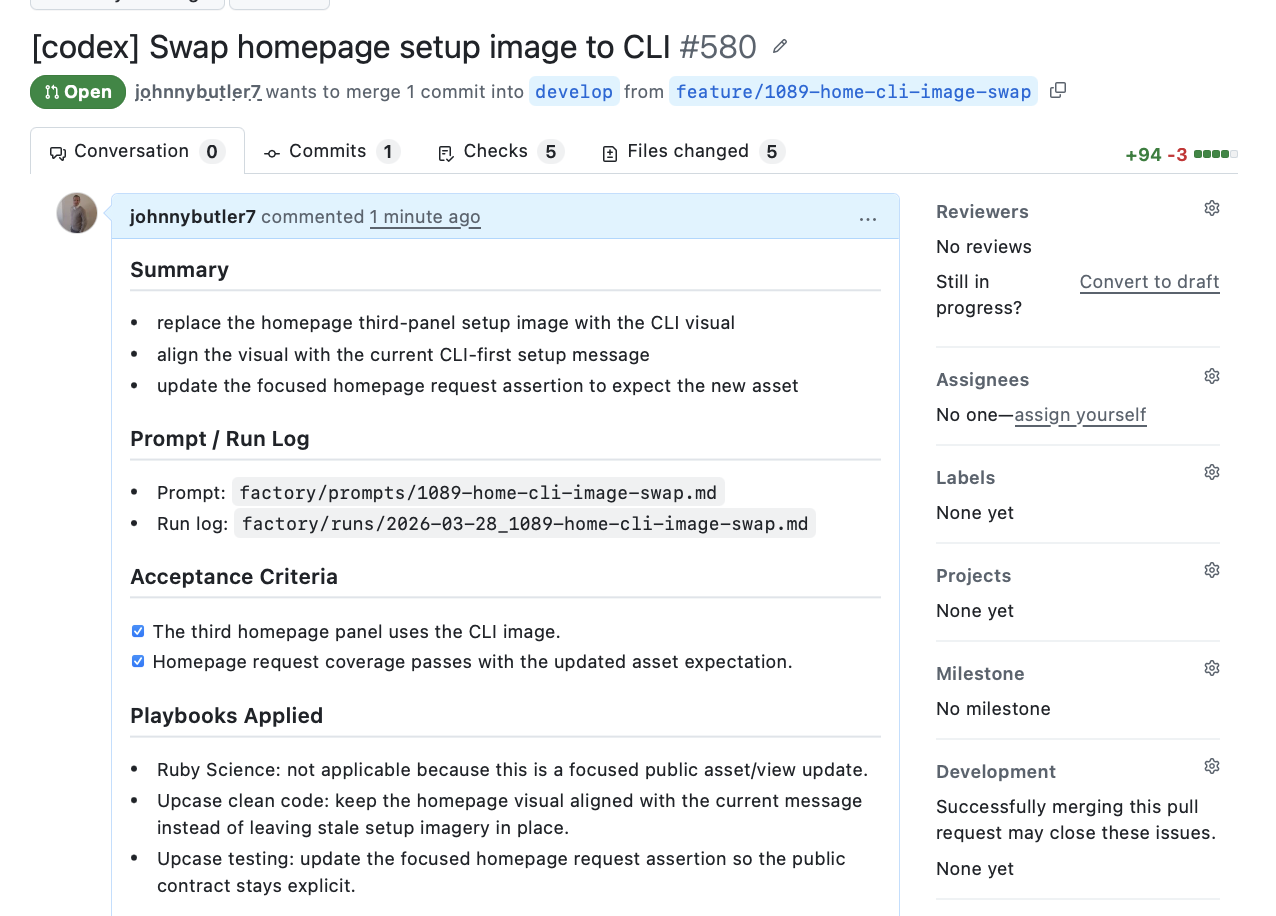

PR trail

Each substantive change carries its scope and checks with it.

Prompt, acceptance criteria, and run log stay attached to the PR.
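As a rough illustration of what "attached to the PR" could mean in practice, here is a minimal sketch of a trail record and a completeness check. The `PrTrail` type, its field names, and `missing_evidence` are hypothetical names chosen for this example; they are not the repository's actual schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PrTrail:
    """Hypothetical record of the evidence that travels with one PR."""
    prompt: str = ""
    acceptance_criteria: List[str] = field(default_factory=list)
    run_log: List[str] = field(default_factory=list)


def missing_evidence(trail: PrTrail) -> List[str]:
    """Return the names of trail items that are empty or absent."""
    required = {
        "prompt": trail.prompt,
        "acceptance_criteria": trail.acceptance_criteria,
        "run_log": trail.run_log,
    }
    return [name for name, value in required.items() if not value]
```

A check like this could run before merge, so a PR with an empty list from `missing_evidence` carries its full trail and anything else is flagged.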

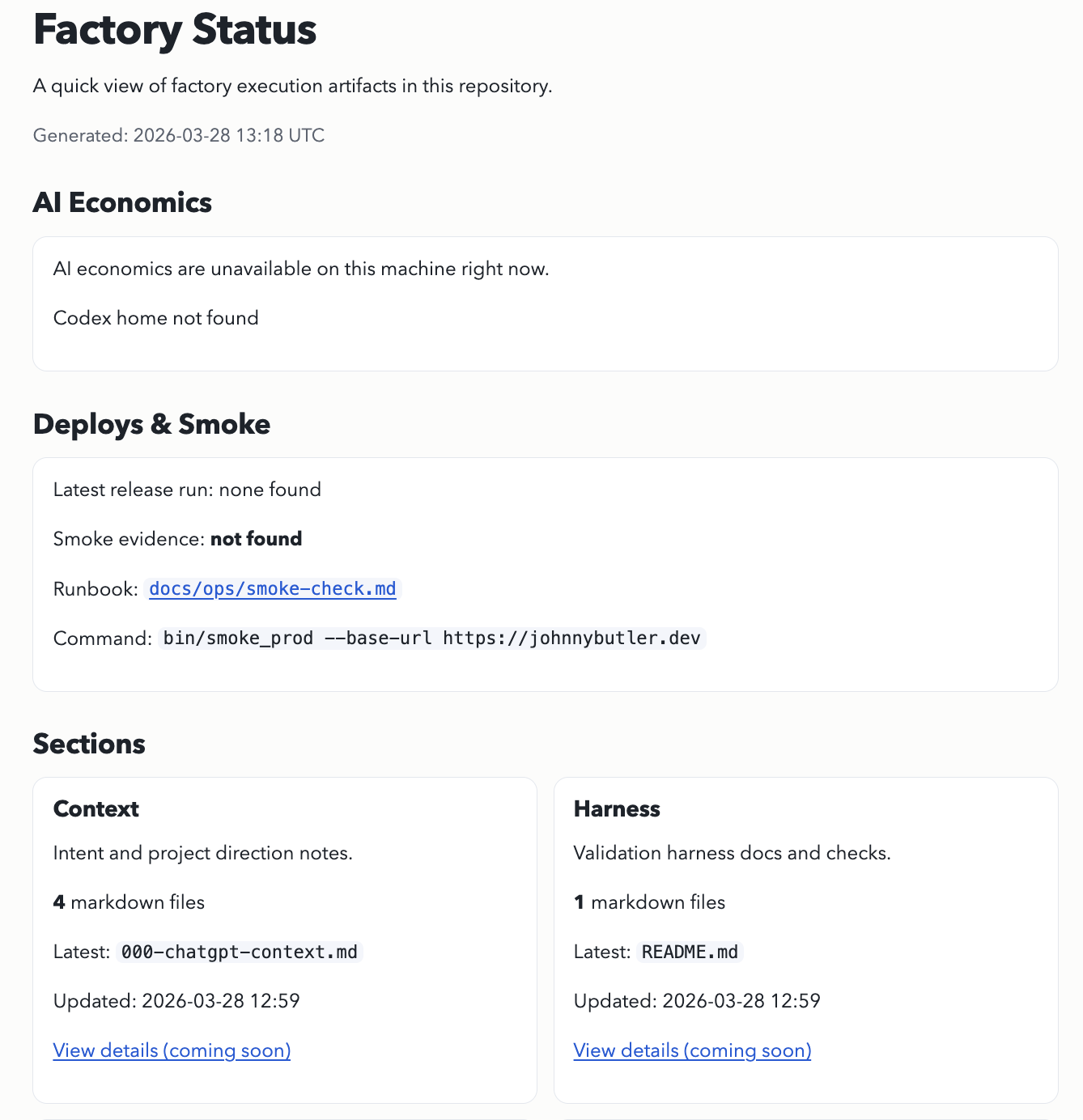

Factory visibility

Status surfaces stay live and inspectable inside the product repo.

Operational evidence is exposed as a working surface, not an afterthought.

Operating model

What Dark Factory Means Here

In practical terms, humans define the job, the boundaries, and what done means. Agents carry more of the implementation work inside those boundaries and iterate until the harness is green.

The point is not magic, and it is not the removal of judgment. It is moving judgment up the stack, then making trust depend on visible validation and artefacts instead of heroic line-by-line diff review.
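The model described above can be sketched as a small loop: a human-defined scope, an agent step, and a harness that decides when the work is done. This is an illustrative sketch only. `Scope`, `RunLog`, `run_until_green`, and the `max_attempts` cap are assumptions made for the example, not the actual Dark Factory implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Scope:
    """Human-defined job, boundaries, and what done means (hypothetical shape)."""
    job: str
    boundaries: List[str]  # recorded for reviewers; not enforced in this sketch
    checks: Dict[str, Callable[[str], bool]]  # check name -> pass/fail predicate


@dataclass
class RunLog:
    """Evidence kept for every attempt, whether it passed or not."""
    entries: List[dict] = field(default_factory=list)


def run_until_green(
    scope: Scope,
    agent_step: Callable[[str, List[str]], str],
    max_attempts: int = 5,
) -> Tuple[Optional[str], RunLog]:
    """Let the agent iterate until every check passes or attempts run out."""
    log = RunLog()
    failed: List[str] = []
    for attempt in range(1, max_attempts + 1):
        # The agent sees the job plus the names of checks that failed last time.
        candidate = agent_step(scope.job, failed)
        results = {name: check(candidate) for name, check in scope.checks.items()}
        log.entries.append({"attempt": attempt, "results": results})
        failed = [name for name, ok in results.items() if not ok]
        if not failed:
            return candidate, log  # harness is green
    return None, log  # never went green; the log still shows what happened
```

The design choice worth noting is that the run log is returned even when no attempt goes green, so the evidence exists regardless of the outcome.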

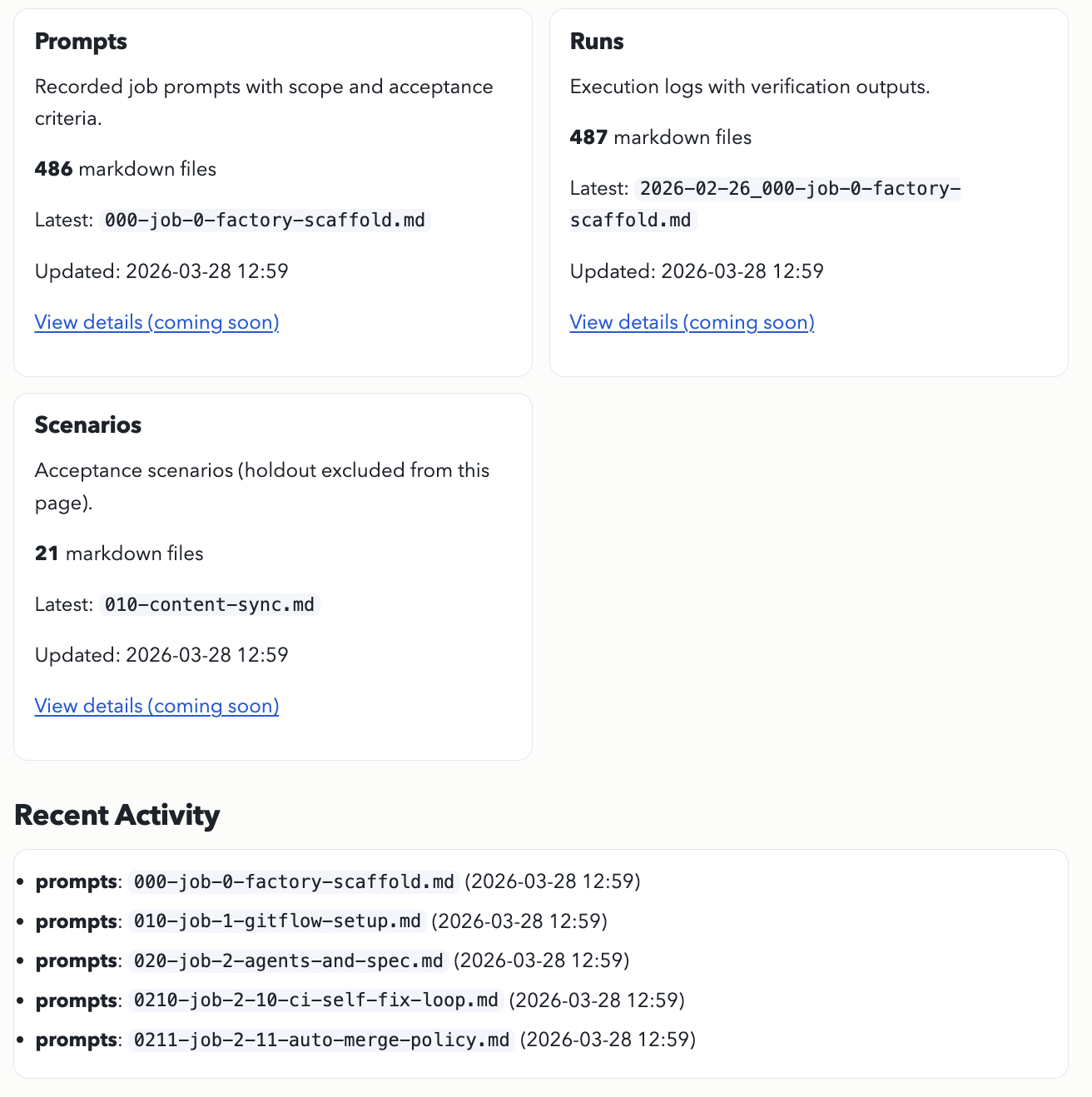

Supporting evidence

More visible artefacts

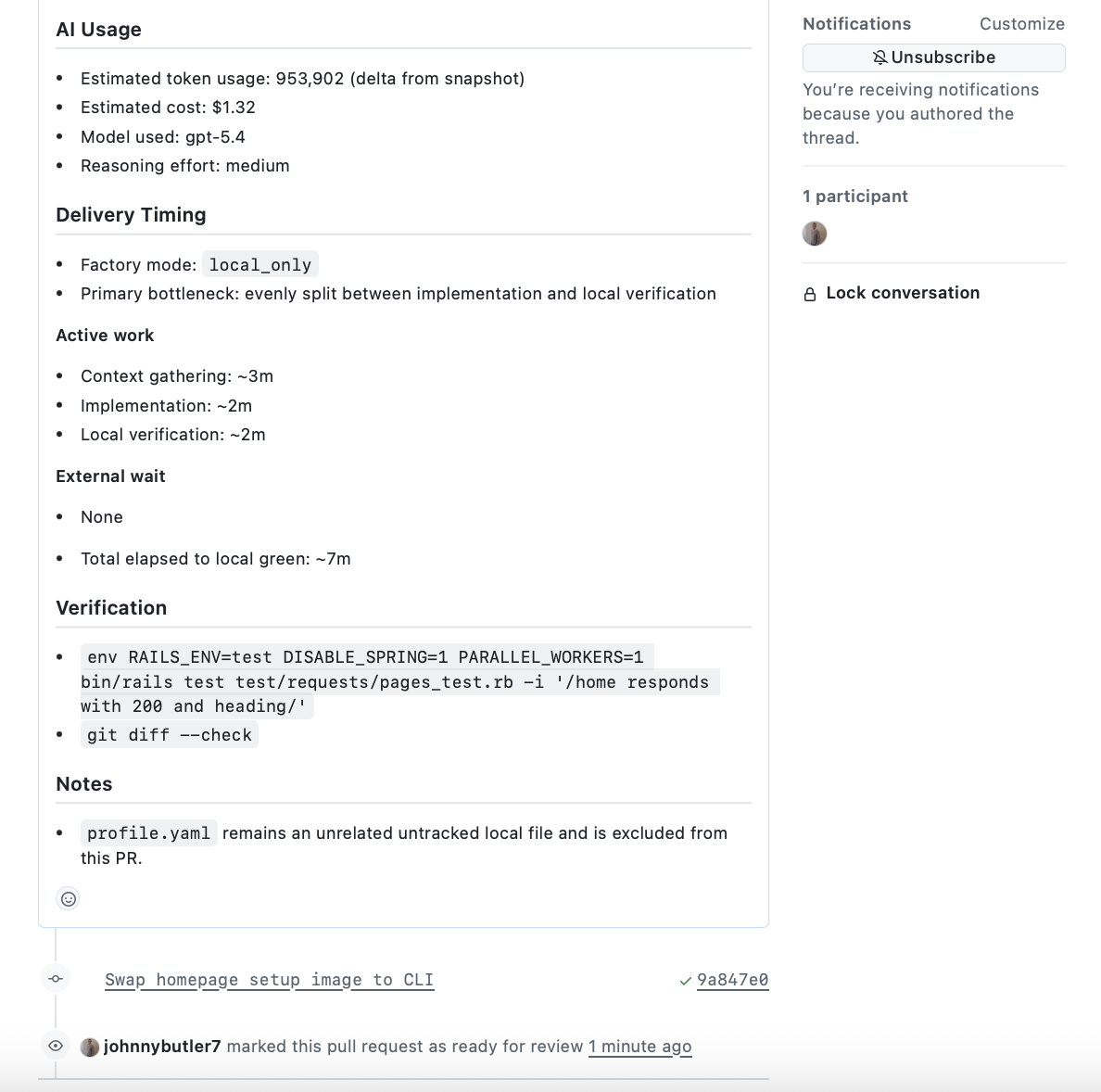

Verification

Usage, timing, and checks stay with each slice of work.

Execution evidence includes the key checks, timing, and operating signals behind the result.

Artefact index

The repository exposes the delivery history around the work.

The workflow leaves a visible trail that other people can inspect without guessing.

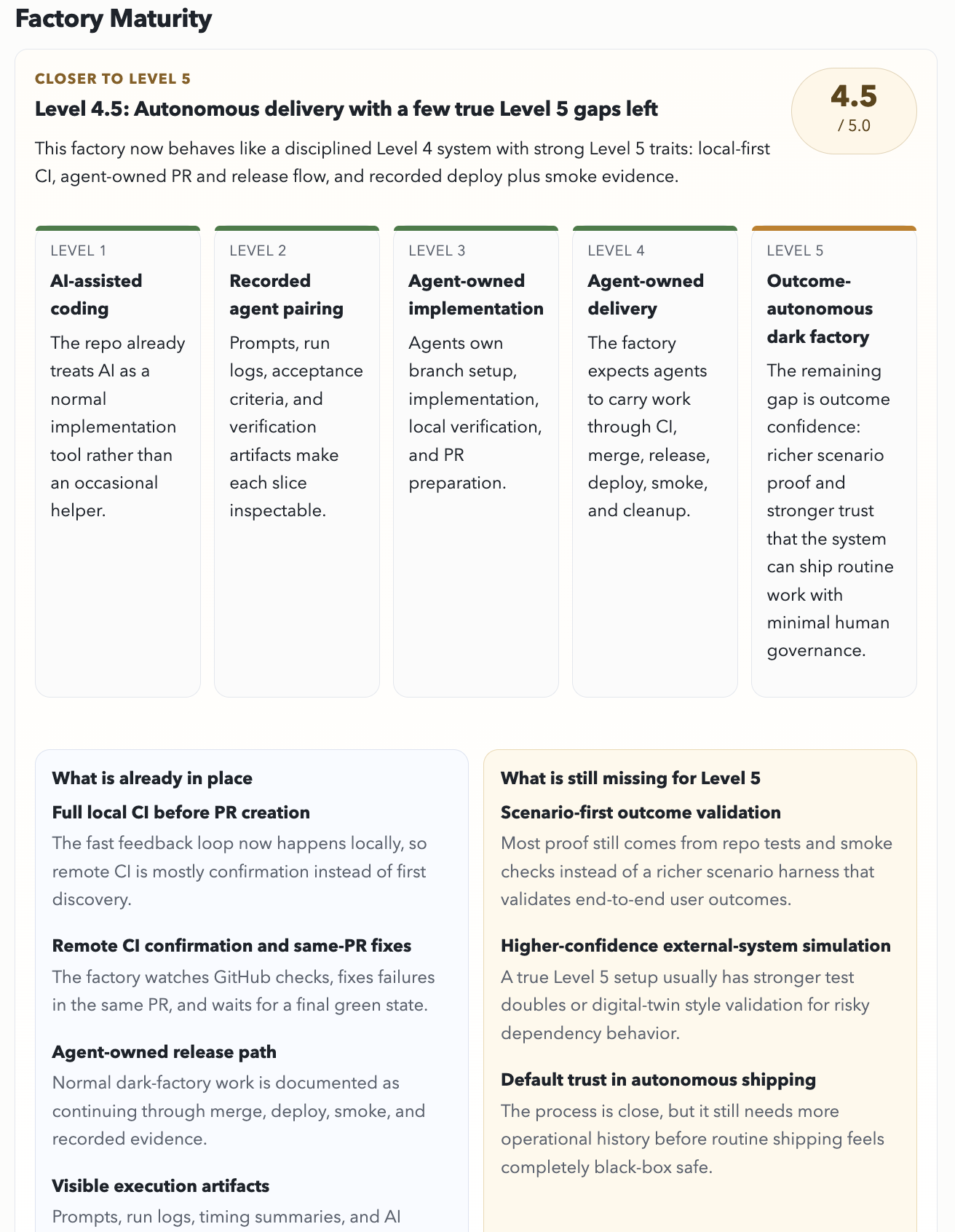

Maturity view

The current factory level is public, inspectable, and incomplete on purpose.

The live maturity panel shows what is already true and what still has to be earned.

Why this matters

Trust should come from evidence, not reconstruction.

In too many teams, the pull request becomes the first place a change is fully explained. That is too late. A better delivery model makes the work legible before implementation starts and keeps the proof attached as it moves toward production.

This kind of trust gets stronger when the product is agent-friendly too: clearer state, grounded surfaces, and public pages like the Agents page that are readable by both people and tools.

Category framing

Why this category is emerging now

As AI increases software output, teams are starting to feel new bottlenecks in review, trust, governance, and architectural consistency. Some tools address individual pieces in isolation, such as PR bottlenecks, drift detection, or AI governance. Dark Factory is aimed at the operating layer underneath them: scoped intent, governed execution, validation evidence, and reusable operating knowledge.

The problem is no longer generation alone. It is shipping change safely, repeatedly, and with enough context to trust what happens next.

Next steps